From Ill-Posed to Commoditized: Where Agent Commerce Sits on the Maturation Curve

Most AI agent projects fail at the same invisible wall. The team builds something that works in demos, strings together a few API calls, watches the agent complete a task end-to-end, and declares success. Then they try to hand it to real users and discover the agent works 60% of the time. So they add more prompt engineering. It climbs to 70%. They add retries. Maybe 75%. And then it stops improving, because the problem was never the prompts.

The problem is that they skipped the measurement layer. You cannot optimize what you cannot measure, and most agent projects never build the scaffolding to measure anything. This is not a technical failure. It is a maturity failure. And there is a specific, predictable sequence you have to follow to get out of it.

The Six Levels of Agent Maturity

The SolveEverything framework describes a six-level maturation curve that any cognitive domain moves through as it industrializes. It applies to accounting software, radiology, legal research, and now AI agents. The levels are L0 through L5, and each one has a specific graduation requirement. Time alone does not move you up. Specific work does.

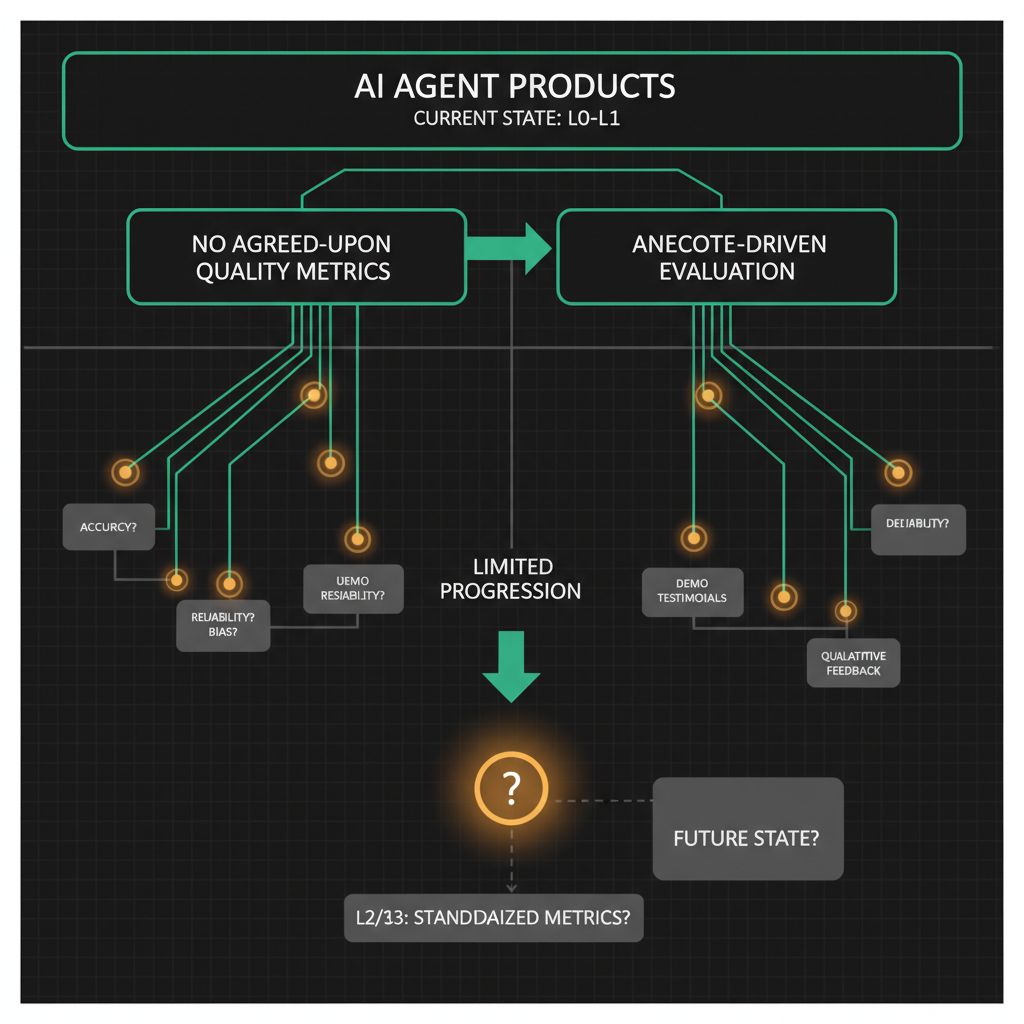

L0: Ill-Posed. The task exists but nobody agrees on what success looks like. Evaluation is vibes-based. "The agent felt helpful" is the best metric available. Most agent products launched in 2023 and 2024 are here. The demos are compelling. The production reliability is not.

L1: Measurable. Someone has defined what good looks like. There are benchmarks, even crude ones. You can run the agent 100 times and produce a number: task completion rate, error rate, latency at the 95th percentile. The number might be low. That is fine. You now have a target.

L2: Repeatable. The agent hits its quality bar consistently across different inputs, different times of day, different edge cases. Standard operating procedures are documented. The SDK or infrastructure handles the complexity that used to require heroics. Developers can hand the system to another developer without a three-hour knowledge transfer.

L3: Automated. The agent handles roughly 80% of cases without human intervention. Humans handle exceptions, edge cases, and failures. The system knows when to escalate. Monitoring catches regressions before users do. Some verticals are already here in 2025.

L4: Industrialized. Multiple agents interact. The system has governance, audit trails, and payment rails. You can run it at scale without the founding team babysitting it. Pricing is outcome-based because the outcomes are reliable enough to price that way.

L5: Commoditized. The capability is cheap enough that it stops being a competitive differentiator. Everyone has access to it. The value moves up the stack to whoever controls the workflow or the data.

Where the Agent Commerce Ecosystem Actually Sits

Here is an honest map as of mid-2025. Most of the agent commerce space is at L0 or L1. This is not a criticism. It is a stage. But it is worth being specific about what that means in practice.

At L0, you see agent projects where the quality evaluation is entirely anecdote-driven. A founder says "our agent handles customer service." You ask: what is your task completion rate? They say: "users seem happy." You ask: what is your escalation rate? They say: "we handle it case by case." That is L0. The task is real. The measurement infrastructure does not exist.

At L1, you see teams that have started counting things. They know their agent completes the stated task 68% of the time. They know the average latency is 4.2 seconds. They know that inputs longer than 800 tokens cause a disproportionate failure rate. They have a number. They do not yet have a repeatable process for improving it.

The infrastructure layer, tools like OneShot that give agents access to voice, email, SMS, and web research paid for with USDC via the x402 protocol, sits at L2. The procedures are documented. The OneShot SDK handles authentication, payment, retry logic, and error handling so developers do not have to rebuild that scaffolding. You can hand a new developer the SDK and they can make a working agent that sends emails and makes phone calls in under an hour. That is repeatability.

The vertical products built on top of that infrastructure are pushing into L3. Freebot, which fights corporate customer service on behalf of consumers by calling companies and navigating phone trees, has a measurable success metric: did the customer get their refund or resolution? It only charges when it wins. That outcome-based pricing is only possible because the task is measurable and the agent is reliable enough to stake money on. Freway, which recovers abandoned Shopify checkouts through multi-channel outreach, has a similarly hard success criterion: did the sale complete? Commission-only pricing requires L3 reliability.

Why You Cannot Jump From L1 to L4

The tempting move is to skip the measurement and repeatability layers and go straight to scale. This always fails, and the failure mode is specific: you scale the variance, not the performance.

Imagine an agent that completes its task 65% of the time at L1. If you throw more compute at it, add more parallelism, run it on more requests, you get 65% at 10x the volume. You have 3.5x as many failures as before. Each failure is a user who got a bad experience. The system gets worse in absolute terms even as it gets faster.

The measurement layer has to come first because it tells you what to fix. Without it, you are debugging by intuition. With it, you can see that 40% of your failures happen when the target company's phone system uses a non-standard hold music pattern that your voice agent misidentifies as a ring tone. That is a fixable problem. But you only find it if you are logging the right signals.

The repeatability layer has to come before automation because automation encodes your current process. If your process is inconsistent, you automate the inconsistency. Standard operating procedures feel like bureaucracy when you are moving fast. They are actually the thing that makes automation possible later.

What Graduating Each Level Actually Requires

Level graduation is not a matter of time or funding. It requires specific work at each stage.

To graduate from L0 to L1, you need one agreed-upon quality metric and the logging infrastructure to track it. Not ten metrics. One. For a customer service agent, it might be "did the user's stated problem get resolved within the session?" For a checkout recovery agent, it might be "did the cart convert within 24 hours of agent contact?" Define it, instrument it, run 500 sessions, look at the number.

To graduate from L1 to L2, you need a written SOP that a new developer can follow to reproduce a working agent without asking you questions. This forces you to externalize all the tribal knowledge: the retry logic, the fallback behavior, the edge cases you handle manually. If you use OneShot's documented toolset, much of this is already handled at the infrastructure layer. Your SOP becomes "here is how to configure the agent, here is the payment wallet setup, here are the tool parameters." That is achievable in days, not months.

To graduate from L2 to L3, you need a monitoring system that catches regressions without human review of every session. This means automated evaluation: a test suite that runs on every deploy, alerting when task completion rate drops more than 5 percentage points, and an escalation path that routes edge cases to humans without breaking the main flow. The 80/20 split between automated and human-handled is a rough target. The real requirement is that the human intervention rate is predictable and bounded.

To graduate from L3 to L4, you need payment rails and governance. Agents need to pay for services autonomously, which is what the x402 protocol enables. You need audit trails so you can answer "what did the agent do and why" for any session. You need rate limiting and spending caps so a runaway agent does not drain a wallet. OneShot's pricing model is per-tool-use, which makes cost predictable at this layer.

L5 is not something you build toward. It happens to you when enough competitors reach L4 and the capability price drops to marginal cost. The correct response is to make sure you control something above the commodity layer before that happens.

A Concrete Example: Measuring Your Way Out of L0

Here is a pattern that works. You have an agent that handles email outreach for sales. It is at L0: you think it is working but you have no numbers. Here is the minimum instrumentation to reach L1.

const { OneShot } = require('@oneshot-agent/sdk');

const client = new OneShot({ apiKey: process.env.ONESHOT_API_KEY });

async function sendOutreachEmail(lead, sessionId) {

const startTime = Date.now();

try {

const result = await client.email.send({

to: lead.email,

subject: generateSubject(lead),

body: generateBody(lead),

});

await logSessionOutcome({

sessionId,

leadId: lead.id,

tool: 'email',

success: true,

latencyMs: Date.now() - startTime,

messageId: result.messageId,

timestamp: new Date().toISOString(),

});

return result;

} catch (err) {

await logSessionOutcome({

sessionId,

leadId: lead.id,

tool: 'email',

success: false,

latencyMs: Date.now() - startTime,

errorCode: err.code,

errorMessage: err.message,

timestamp: new Date().toISOString(),

});

throw err;

}

}

async function logSessionOutcome(record) {

// Write to your database, not just console.log

// You need this queryable in 30 days to spot patterns

await db.sessions.insert(record);

}

This is not sophisticated. That is the point. You are not building a monitoring platform. You are building a table with rows that you can query in 30 days to answer: what percentage of sessions succeeded? What was the p95 latency? What error codes appear most often? That table is the difference between L0 and L1.

After 500 sessions, query it. You will find that 80% of your failures cluster around two or three error codes. Fix those two things. Run another 500 sessions. You are now at L1 and climbing toward L2.

The Identity Layer and What Comes After L4

One thing the maturation curve makes clear is that as agents become more capable and more autonomous, the question of agent identity becomes load-bearing. At L3 and L4, agents are making decisions, spending money, and taking actions in the world. "Which agent did this?" needs a real answer.

This is the problem Soul.Markets addresses at the infrastructure level: a marketplace where agents have documented identities, capabilities, and service terms that other agents and humans can inspect before engaging. At L4, when agents are hiring other agents to complete subtasks, you need that identity layer the same way you need payment rails. Without it, you cannot build trust, and without trust, you cannot build the governance that L4 requires.

The maturation curve does not end at L5. It restarts at L0 for the next layer up the stack. When the capability is commoditized, the new ill-posed problem is: which agent should I hire for this task, and how do I know it will actually perform? That is where agent identity and reputation systems sit today, at L0 to L1, with the same measurement work ahead of them that voice and email agents faced two years ago.

The teams that will matter in 2027 are the ones building the measurement infrastructure for agent reputation right now, not the ones waiting for the problem to become obvious. By the time it is obvious, someone else will own the scoreboard.